## ChatGPT Launches Specialized Health Interface to Protect User Privacy

Acknowledging how often people turn to the internet for medical information, OpenAI is introducing a dedicated space within ChatGPT for sensitive health inquiries. The scale of that demand explains the move: the platform already fields health and wellness questions from more than 230 million unique users every week.

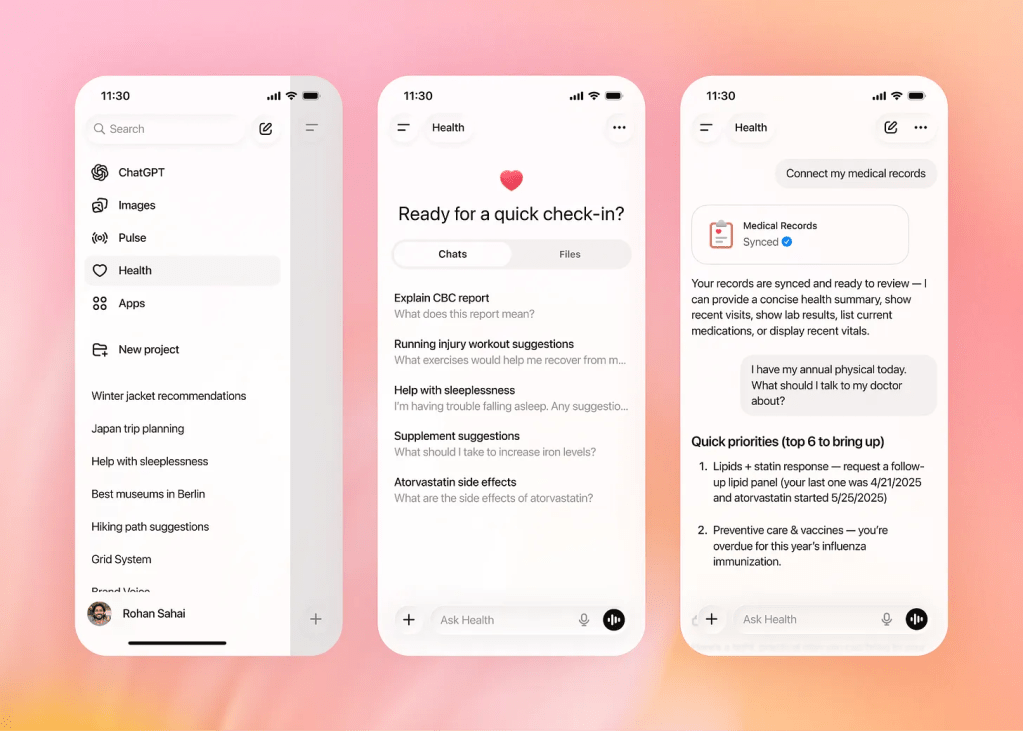

The new feature, tentatively dubbed ChatGPT Health, is designed to compartmentalize sensitive discussions. Health-related chats are kept separate from the standard chat history, so a person's medical or wellness information will not inadvertently surface in general conversations with the AI.

To help maintain these privacy boundaries, ChatGPT will steer users toward the new section. If a user starts discussing medical issues outside the dedicated Health area, the AI is programmed to gently suggest switching to the protected interface.

The separation does not block all context, however: the Health environment can draw on relevant background from standard chats. If a user has previously asked ChatGPT to help structure a marathon training plan, for example, the Health feature will know they are a runner when they discuss specific fitness goals or potential injuries.

The Health interface is also expected to connect with external wellness-tracking apps, drawing directly on personal data and medical records stored in popular services such as Apple Health, Function, and MyFitnessPal.

On privacy, OpenAI has made a firm commitment: conversations held in the Health section will be excluded from the data used to train or refine its AI models. The pledge is meant to reassure users that their most private information will stay protected.

The company also keeps explicit legal limits on the feature's scope. OpenAI's terms of service still state that the technology is **not intended for use in the diagnosis or treatment of any health condition**, positioning the tool as a supplementary resource rather than a substitute for professional medical advice.

The feature is scheduled to roll out to users in the coming weeks.