The global financial landscape is navigating a dual transformation: a significant pivot in monetary leadership and a fundamental maturation of the artificial intelligence sector. President Trump’s nomination of Kevin Warsh to lead the Federal Reserve has introduced a new variable into market calculations, prompting a recalibration across asset classes. As Wall Street processed the implications of a Warsh-led central bank, equity markets pulled back and the recent rally in precious metals began to unwind, even against the broader backdrop of a positive January. This volatility arrives alongside a deeper structural shift within the technology sector, where the focus is moving rapidly from the novelty of generative capabilities to the grueling realities of industrial-scale deployment.

As the AI industry moves from an era of experimental model expansion to one of mass-market production, success is being redefined by the hard constraints of physical infrastructure and unit economics. Natalie Hwang, founding managing partner of Apeira Capital, suggests that the sector has entered a critical phase in which the sheer size of a large language model is no longer the primary determinant of competitive advantage. Instead, the strategic frontier has moved toward the economics of running intelligence at scale. Initial investment cycles were dominated by the episodic, front-loaded costs of model training; the current trajectory places a premium on inference, the continuous operating expense incurred by live usage. For enterprises targeting global deployment, the focus has shifted from headline performance to the granular optimization of cost per inference, power efficiency, and system utilization.

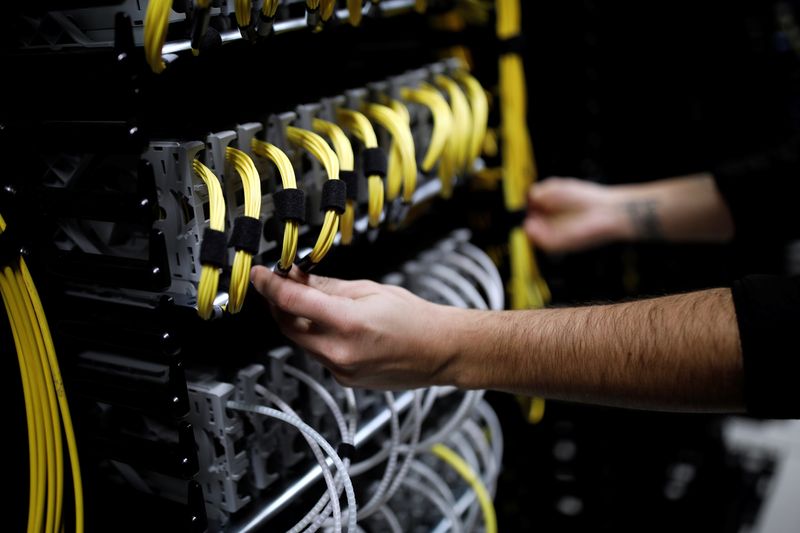

This transition highlights a growing divergence between companies that prioritized rapid scaling and those that designed for sustainable operational viability. Hwang notes that organizations tethered to inefficient deployment architectures risk seeing their operating expenditures outpace revenue growth, creating a precarious financial profile. Conversely, firms that built inference economics into their foundational strategies, optimizing for latency and total cost of ownership, are significantly better positioned to achieve defensible scale. This industrialization is increasingly dictated by the availability of power, which has emerged as a primary determinant of where infrastructure is located. Proximity to robust energy grids is now arguably more vital than traditional factors such as network connectivity or real estate costs, and regions burdened by constrained utilities or protracted permitting processes face structural headwinds.

Ultimately, the next phase of the AI gold rush will likely favor those who treat artificial intelligence not merely as a feature but as a comprehensive operating system designed around real-world deployment constraints. The winners in this reshuffled capital allocation landscape will be the entities that can maintain predictable performance under rigorous conditions while navigating the physical limits of energy and hardware. As these forces mature, they amount to a fundamental reorganization of competitive advantage that has yet to be fully reflected in current market expectations, and they change how investors must evaluate the long-term value of the intelligence economy.