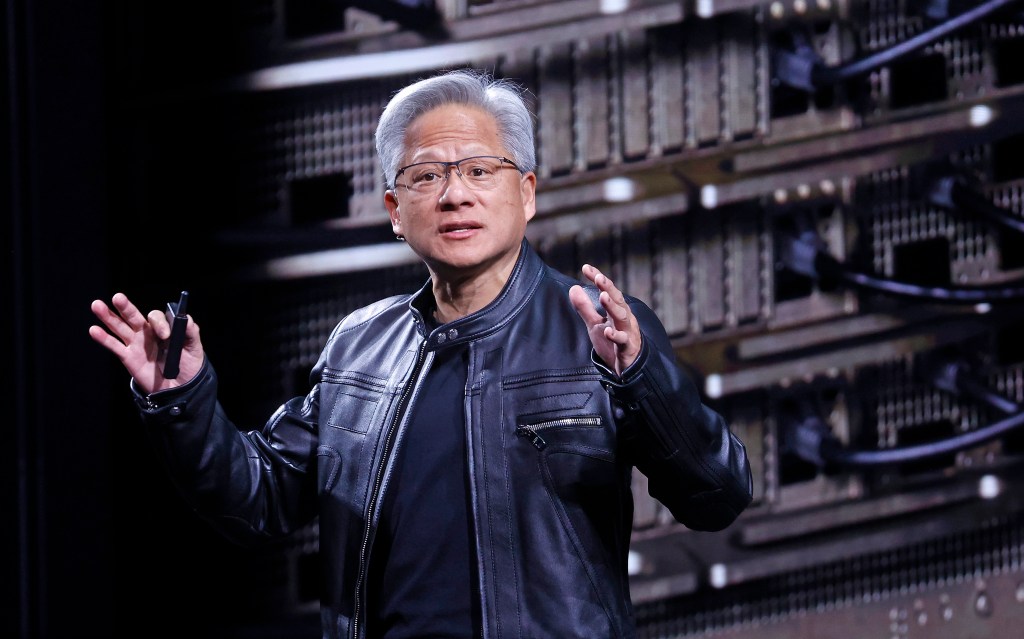

“Vera Rubin is designed to address this fundamental challenge that we have: The amount of computation necessary for AI is skyrocketing,” Huang told the audience. “Today, I can tell you that Vera Rubin is in full production.”

Explaining the benefits of the new storage, Nvidia’s senior director of AI infrastructure solutions Dion Harris pointed to the growing cache-related memory demands of modern AI systems.

As expected, the new architecture also represents a significant advance in speed and power efficiency. According to Nvidia’s tests, the Rubin architecture will operate three and a half times faster than the previous Blackwell architecture on model-training tasks and five times faster on inference tasks, reaching as high as 50 petaflops. The new platform will also support eight times more inference compute per watt.
